Example 53: Hybrid feature selection.

#include <boost/smart_ptr.hpp>
#include <exception>
#include <iostream>
#include <cstdlib>
#include <string>
#include <vector>
#include "error.hpp"
#include "global.hpp"
#include "subset.hpp"
#include "data_intervaller.hpp"
#include "data_splitter.hpp"
#include "data_splitter_cv.hpp"
#include "data_splitter_randrand.hpp"
#include "data_scaler.hpp"
#include "data_scaler_to01.hpp"
#include "data_accessor_splitting_memTRN.hpp"
#include "data_accessor_splitting_memARFF.hpp"
#include "criterion_normal_bhattacharyya.hpp"
#include "criterion_wrapper.hpp"
#include "classifier_svm.hpp"
#include "seq_step_hybrid.hpp"
#include "search_seq_sfs.hpp"

| Functions | |
| --- | --- |
| int | main () |

int main ()

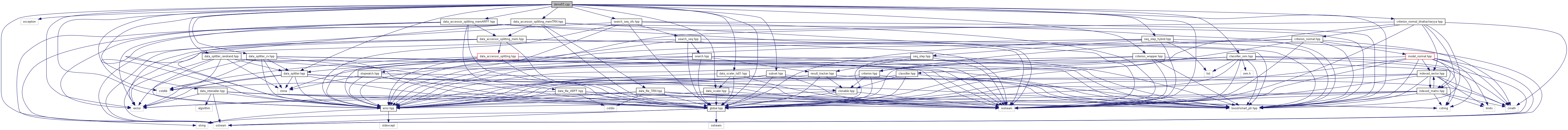

Selects features using a hybridized SBS algorithm in which the primary criterion is the classification accuracy estimated by an SVM wrapper and the pre-filtering criterion is the normal Bhattacharyya distance. In each step of the algorithm, all current feature subset candidates are first evaluated by the Bhattacharyya distance; the worse 50% are discarded, and only the remaining 50% are evaluated by the slow SVM wrapper to select intermediate solutions. 50% of the data is randomly chosen to form the training dataset, which stays fixed for the whole search, and another 40% is randomly chosen to be used at the end for validating the classification performance on the finally selected subspace. The selected training and test parts are disjoint and together cover 90% of the original data. SBS is called in the d-optimizing setting, invoked by passing 0 as the first argument in search(0,...); that argument is otherwise used to specify the required subset size.
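The pre-filtering idea described above can be illustrated independently of the library. The following is a minimal sketch, not the FST implementation: the names `Subset`, `Criterion` and `hybrid_backward_step` are hypothetical, the criteria are passed in as plain callables, and rounding the number of kept candidates down (with a minimum of one) is an assumption. It performs a single backward step: every candidate with one feature removed is scored by the cheap filter criterion, the better half is kept, and only those candidates are scored by the expensive wrapper criterion.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <functional>
#include <utility>
#include <vector>

// A candidate subset is a list of feature indices (hypothetical representation).
using Subset = std::vector<std::size_t>;
// A criterion maps a subset to a score; higher is better (assumption).
using Criterion = std::function<double(const Subset&)>;

// One hybrid backward step (illustrative helper, not part of FST).
// Returns the best subset of size |current| - 1 among the candidates
// that survive pre-filtering.
Subset hybrid_backward_step(const Subset& current,
                            const Criterion& filter,
                            const Criterion& wrapper,
                            double keep_fraction = 0.5)
{
    assert(keep_fraction > 0.0 && keep_fraction <= 1.0);

    // Build every candidate obtained by removing exactly one feature,
    // and score it with the cheap filter criterion.
    std::vector<std::pair<double, Subset>> ranked;
    for (std::size_t i = 0; i < current.size(); ++i) {
        Subset candidate;
        for (std::size_t j = 0; j < current.size(); ++j)
            if (j != i) candidate.push_back(current[j]);
        ranked.emplace_back(filter(candidate), candidate);
    }
    if (ranked.empty()) return current;  // nothing to remove

    // Order candidates by filter score, best first.
    std::stable_sort(ranked.begin(), ranked.end(),
        [](const std::pair<double, Subset>& a,
           const std::pair<double, Subset>& b) { return a.first > b.first; });

    // Keep the better fraction of candidates, but at least one.
    const std::size_t keep = std::max<std::size_t>(
        1, static_cast<std::size_t>(ranked.size() * keep_fraction));

    // Evaluate the expensive wrapper criterion only on the kept candidates.
    std::size_t best = 0;
    double best_value = wrapper(ranked[0].second);
    for (std::size_t k = 1; k < keep; ++k) {
        const double value = wrapper(ranked[k].second);
        if (value > best_value) { best_value = value; best = k; }
    }
    return ranked[best].second;
}
```

With four candidates and `keep_fraction = 0.5`, the wrapper is called only twice per step instead of four times. The trade-off is that a candidate the wrapper would have preferred can be discarded if the filter criterion ranks it in the lower half.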

References FST::Search_SFS< RETURNTYPE, DIMTYPE, SUBSET, CRITERION, EVALUATOR >::search(), FST::Search< RETURNTYPE, DIMTYPE, SUBSET, CRITERION >::set_output_detail(), and FST::Search_SFS< RETURNTYPE, DIMTYPE, SUBSET, CRITERION, EVALUATOR >::set_search_direction().

1.6.1